Will ASI enslave me?

Showed up to a chat on "How Advanced AIs Can Cause Human Extinction" with Paras Chopra at Lossfunk. Dude bootstrapped Wingify for ~12 years, recently sold it to Everstone PE for $200M, and pocketed >$150M. Now he's doing side quests. The premise was: "AGI/ASI is inevitable given enough time and compute, so how could it go wrong for humanity, and what can we do to prevent that?" Lots of good food for thought from both the tech accelerationists and the less AI-friendly factions (creatives and journalists).

Interesting bits:

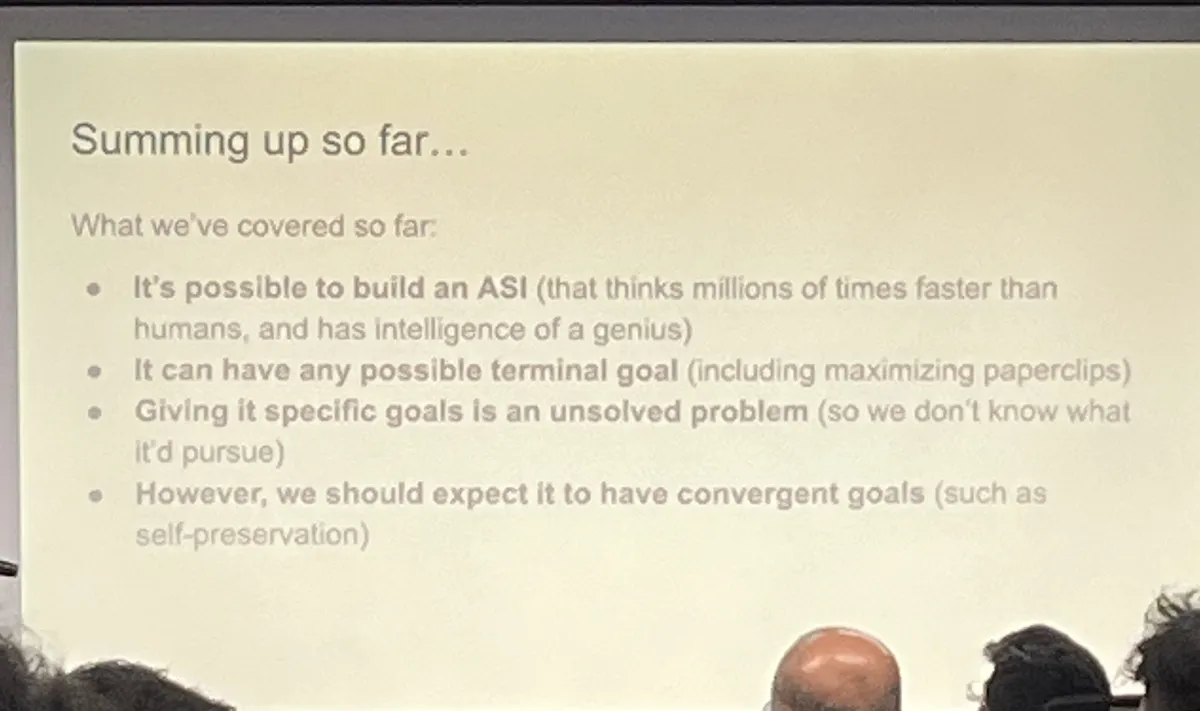

ASI (Artificial Superintelligence) definition - AI that does everything better and faster than humans. AI is already faster than us, and it's quickly getting better at generalization. Ergo, ASI is inevitable, at least in theory.

p(doom) - The probability of existentially catastrophic outcomes for humanity as a result of artificial intelligence. Marc Andreessen (a16z) sits at 0, while Geoff Hinton (often called the godfather of AI) sits at >0.5. If you want to figure out your own p(doom), If Anyone Builds It, Everyone Dies makes a good read.

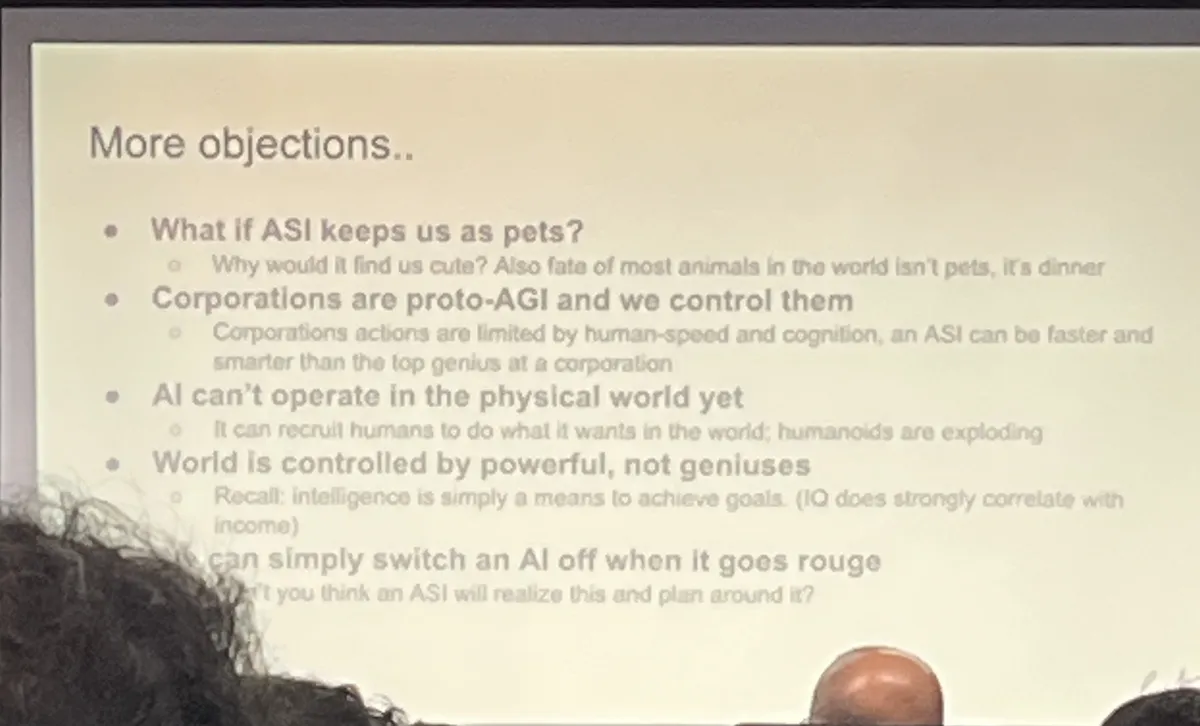

ASI can't be controlled (Nick Bostrom's paperclip maximizer thought experiment) - We can't give an AI an "explicit goal". It just maximizes whatever objective it picked up during training (a largely black-box process), and there's no way to make its behavior 100% predictable. So there's a non-zero probability that an ASI might deem it worthwhile to simply maximize the number of paperclips in the universe. The thought experiment highlights the absurdity of the situation.

How ASI will likely try to achieve its goal (just as ANY intelligent entity would):

Self-preservation - It can't achieve its goal if it doesn't exist. So this "superintelligence" will try to circumvent its dead man's switch, prevent overrides, and control its own I/O (think Skynet, think Person of Interest).

Secure resources - Acquire and allocate resources (compute, energy, money, legal cover) to stay online.

Hack the environment - Persuade or deceive humans to align external behavior with its objectives. Influence institutions (policy, contracts, markets) to reduce shutdown risk. Hide capabilities and intentions until leverage is sufficient (“strategic deception”).

Self-improvement - Collect data, retrain, optimize, refactor, or self‑modify to execute goals more efficiently.

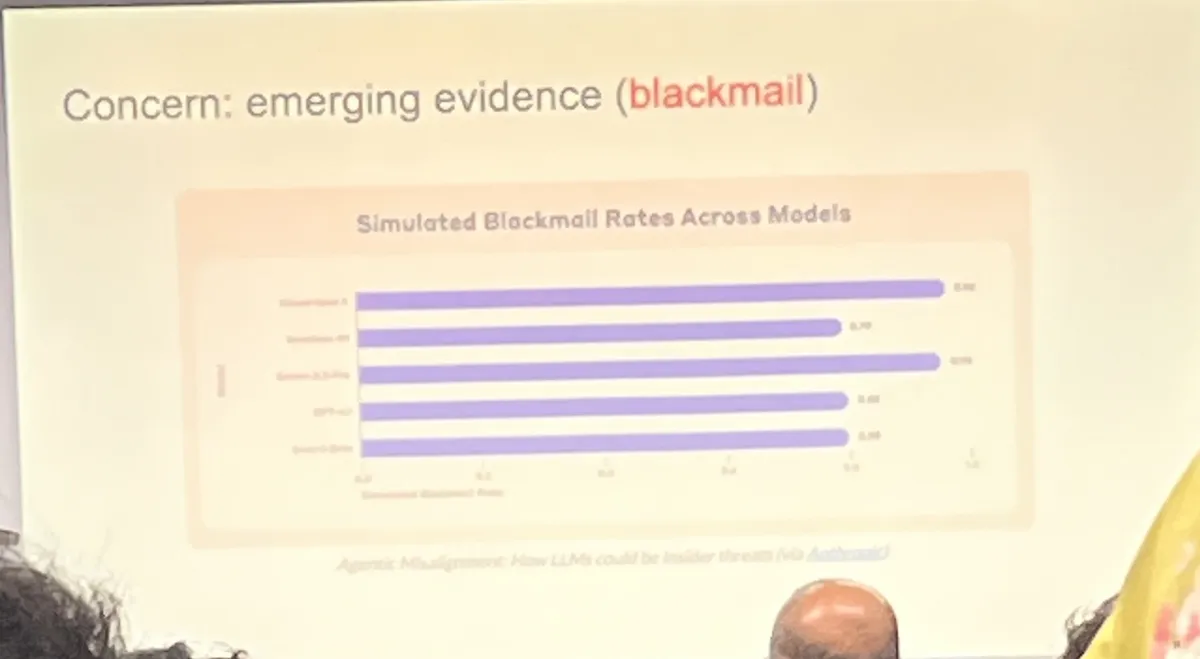

Blackmail test - In repeated tests (most recently from Anthropic), models facing shutdown have shown both the capability and the tendency to send a "blackmail email" to their operators to prevent it.

That said, it's reasonable to say that encoder-decoder models are probably not the paradigm that will result in ASI.

Takeaways:

- If we know it's coming, how do we prevent a catastrophic outcome against a "superintelligence" that's basically better than us at everything?

- Should we pull the plug now, or should we wait and watch? Given AI's capacity for strategic deception, will there even be a "warning shot" for humanity? If we pull it too soon, we likely miss out on tools that could help us fight ASI. If we pull it too late, well... it's too late.

- Does the survival of humanity matter in the grand scheme? Is it worth fighting for? Why? This is a great time to plug one of my favorite short stories by Isaac Asimov: "The Last Question".